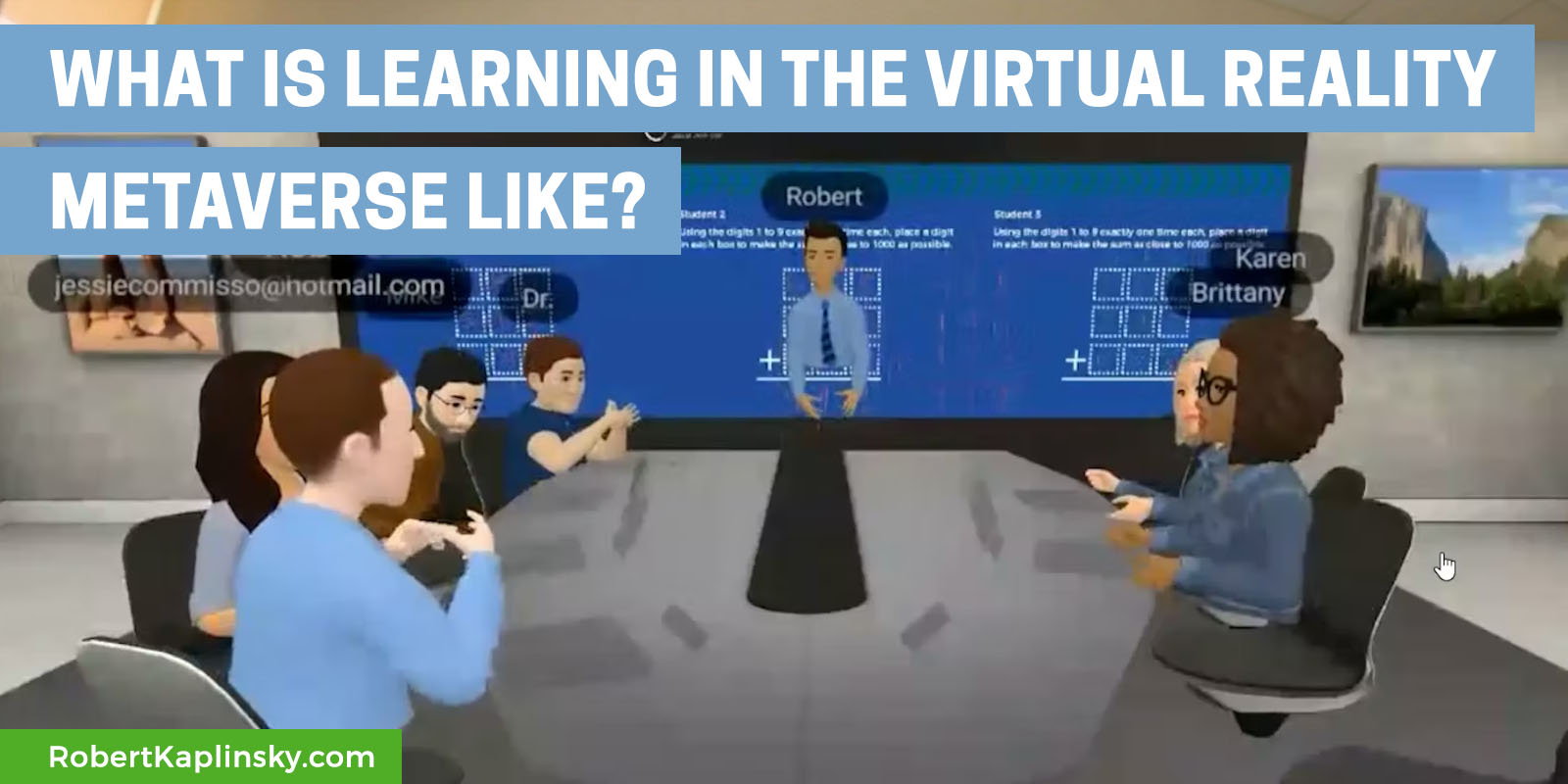

A group of math educators came together to experience what teaching math in the virtual reality metaverse might look like. We tried out two Open Middle math problems and reflected on the experience. We recorded the whole thing and I wanted to share some of the highlights so you could get a feel for what it might look like.

For those interested in the details, we were all using Meta (formerly Oculus) Quest 2 headsets and the Horizon Workrooms app. Our experience with the app ranged from a first or second session to about fifteen uses (so consider how many times it took for you to feel comfortable with Zoom).

Also, in the app, people’s voices got quieter when they were farther away or when they turned away from you, just like in real life. In the recording, everyone was equally loud, which made it harder to listen to when everyone was talking at the same time.

Explaining The First Problem

We started off by explaining the first problem and having people work together in pairs. While students normally get individual thinking time to begin a problem, we were curious about what collaboration was like in a virtual space, so we started with that.

Inviting People To Work At The Board

As we continued working on the problem, at 0:30 I asked one group to come up and work at the board.

First Reflections On Changes We'd Like To See

As we were working, we paused to share reflections on what we’d like to see improved. For example, there’s no individual space to do your own writing, so everyone has to share the same limited whiteboard area.

Towards the end of the first problem, Jessie and Rob discovered that giving someone else a virtual high five or high ten causes a little explosion to occur. Then Mike and Karl (“Dr.”) started playing Rock, Paper, Scissors. Going forward, I think we’ll start every training by doing high fives and rock, paper, scissors. They’re fun ways to increase engagement and get people familiar with what’s possible.

Reflections On The Importance Of Communication

In real life, partners can usually see what each other is writing. However, that’s not the case in this particular app. So, Jessie and Rob reflected on how they learned that they needed to describe what they were doing while they were working.

Body Language Is Different

The Quest 2 headset cannot read facial expressions such as raising your eyebrows, opening or closing your eyes, or the way you move your lips. Accordingly, it can look like you’re just staring at someone rather than being expressive. So, I’ve learned to nod my head a lot to show that I’m listening, as that is one of the expressions that does come across well in virtual reality.

Harder To Tune People Out

In this app, there is currently no way to mute other people. If there were a way to do something like breakout rooms where you could only hear the people in your group, it’d be less distracting.

Explaining The Second Problem

Then we started working on the second problem, again in pairs.

Trying Multiple Groups At The Board

For this problem we tried having Karen and Brittany up at the board with Karl (“Dr.”) and Mike (who had to leave a few moments earlier) while Jessie and Rob stayed at the table. While the video captured all voices at equal volumes, in the room, conversations were easier because people who were farther away were quieter.

Debriefing The Second Problem

Here’s what it was like to talk about the second problem with people all around the virtual classroom.

Here, Karl (“Dr.”) explains his strategy for solving the second problem.

Frequently Asked Questions

Most motion-based virtual reality games (and every single virtual reality ride like Star Tours) make me extremely nauseous these days. Fortunately, this app doesn’t make me feel that way at all.

How’s the battery life?

The battery life is still not that great and the headset lasts around two hours. The controllers last a very long time though.

What else should I know?

Wearing the headset also really messes up your hair. It gives you something of an inverted mohawk. I don’t wear glasses with it, but if you do, you need to insert a provided adapter to wear it comfortably.

Here’s my growing wish list of features I would find very useful that don’t currently exist:

Virtual Manipulatives

It would be amazing if we could import virtual tools to use in this space. Imagine base-10 blocks, shapes, number tiles, or even a calculator. I imagine this will come one day, but it’s not here yet.

Individual Desk Whiteboards

Currently everyone has access to a shared whiteboard. So everyone is working on the same writing space at the same time. That’s great for a meeting and less great for a classroom. It’d be even better if each student could have their own whiteboard and then the facilitator could select the one to display and share.

Viewing Other People’s Whiteboards

Building on the last idea, I wish there were a way to see what other people were doing with their whiteboards. I totally get that it’s most likely related to privacy concerns, but as a teacher I’d love to be able to walk around the virtual space and see what students are doing. That currently is not possible unless everyone is working on the same whiteboard at the same time. Even then, you’d have to partition it into sections so each person could work in their own area.

Make It Easier To Record A Room View

Right now there’s no easy way to record the whole room. I had to join the meeting as a computer-based participant, make the screen as big as possible, and then use a screen recording tool to record the view. Even then, the audio did not sound like it did in the room.

You can record from your headset, but then it only records what you see, which is not ideal and can be nauseating to watch, like a really bumpy camera.

Breakout Rooms

It’d be nice if you could select which people you want to be grouped with and mute everyone else. Maybe it could also let you see each other’s whiteboards.

Multiple Whiteboards

As far as I can tell, only one whiteboard can be active at a time. It would be nice if each person could have their own whiteboard and then we could switch to that whiteboard to share what they did.

Thank you to Karen, Brittany, Rob, Mike, Jessie, and Karl (“Dr.”) for going out of your way to explore this with me. I really appreciate it!

Overall, this was quite an experience and it gave us a glimpse into what is possible. I still prefer being in person, but there are parts of this experience that are much better than Zoom, especially if it’s just two or three people (like in tutoring). Years from now there will be headsets with facial expression capture, longer battery life, and better resolution, and this is going to be amazing.

What do you think? Please let me know your thoughts in the comments. What are you excited about? What questions do you still have? What parts could I better explain?

Fascinating. I found the chatter a bit unnerving which I also found curious- because the same six people chatting around a conference table in a similar situation but in ‘real life’ wouldn’t have been as unnerving to me. It would be great, as you say to be able to mute people- even better to be able to ‘turn down the volume’ on specific people/voices.

The limitation in communication from not having facial expressions cannot be underestimated. I think when Meta figures that out someday (they will) it will represent a huge leap in communicating via the metaverse.

Thanks Jeff. When using the headset, it wasn’t quite as unnerving because the volumes changed more closely to how they would in real life. The recording didn’t capture that, for some reason.

I heard that there is a new kind of headset (expensive!) coming out that will have facial expression capturing. That will be neat to see incorporated.